The Business Case for Moving Off Alteryx Server

Alteryx Server has served as the scheduling and governance backbone for many enterprise analytics teams. But as organizations scale their data operations and adopt cloud-first strategies, the limitations of Alteryx Server become increasingly difficult to ignore.

The most common drivers for migration include:

- Licensing costs: Alteryx Server is licensed per core, and Designer licenses run approximately $5,000–$6,000 per user per year. For a team of 50 analysts, Designer licensing alone comes to roughly $250,000–$300,000 per year, before Server licensing and infrastructure costs.

- Scalability limits: Alteryx Server scales vertically, not horizontally. Processing a 500 GB dataset requires provisioning a single large machine rather than distributing work across a cluster. This creates bottlenecks that cloud-native platforms eliminate by design.

- Vendor lock-in: Workflows encoded in .yxmd format, macros written in Alteryx formula language, and analytic apps bound to the Alteryx Gallery create deep platform dependency. The longer an organization waits, the more expensive extraction becomes.

- Talent availability: Python and SQL developers outnumber Alteryx specialists by orders of magnitude. Cloud-native pipelines written in PySpark or dbt attract a broader talent pool and reduce hiring risk.

- Cloud economics: Pay-per-use compute on Databricks, Snowflake, or AWS Glue often costs a fraction of always-on Alteryx Server infrastructure, especially for batch workloads that run for minutes but reserve capacity 24/7.

A Fortune 500 financial services firm reduced its annual data platform costs by 62% after migrating 400+ Alteryx workflows to PySpark on Databricks—while improving average job execution time by 3.5x through distributed compute.

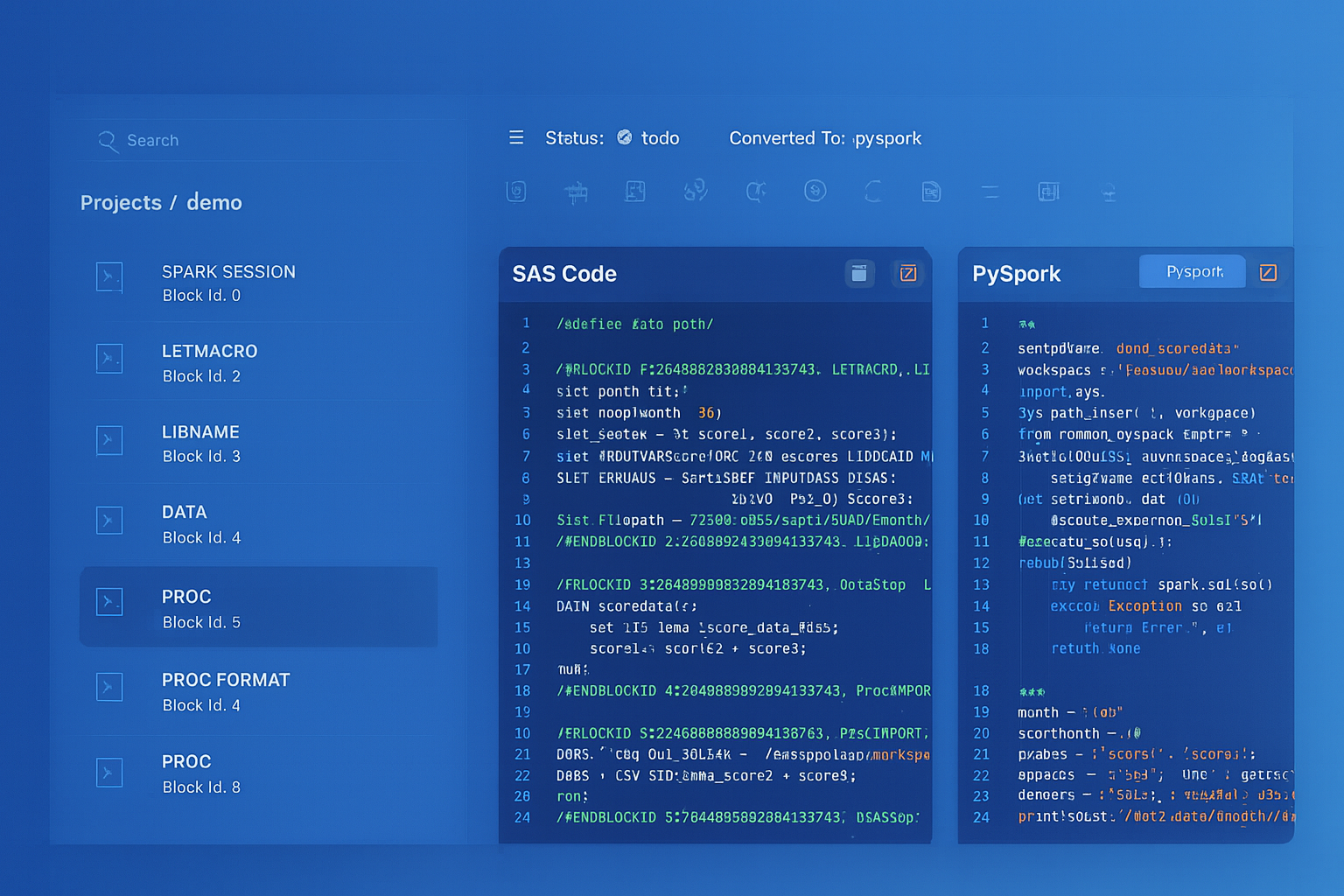

Alteryx to Databricks migration — automated end-to-end by MigryX

Target Architecture Options

There is no single “right” target for Alteryx migration. The best choice depends on your data volumes, team skills, existing cloud contracts, and governance requirements. Here are the three most common landing zones:

Option 1: Databricks (PySpark + Delta Lake)

Best for organizations processing large-scale data (100 GB+) that need distributed compute, ML integration, and lakehouse architecture. Alteryx workflows map naturally to PySpark DataFrames, and Databricks Workflows replaces Alteryx Server scheduling. Delta Lake provides ACID transactions that Alteryx file-based outputs lack.

Option 2: Snowflake (Snowpark + SQL)

Best for teams with strong SQL skills and data already resident in Snowflake. Snowpark Python enables DataFrame-style transformations that execute natively in Snowflake’s compute layer, eliminating data movement. Snowflake Tasks and Streams replace Alteryx Server’s scheduling and event-driven triggers.

Option 3: Pure Python on Kubernetes

Best for organizations that want maximum portability and already operate Kubernetes clusters. Workflows convert to standard Python scripts using pandas or Polars, packaged as Docker containers, and orchestrated by Airflow, Prefect, or Dagster. This option avoids any new platform lock-in but requires more operational maturity.

| Criteria | Databricks | Snowflake | Python + K8s |
|---|---|---|---|
| Data scale | 100 GB–PB | 10 GB–TB | 1 GB–100 GB |
| Primary language | PySpark, SQL | SQL, Snowpark | Python (pandas/Polars) |
| Scheduling | Databricks Workflows | Snowflake Tasks | Airflow / Prefect |
| ML integration | MLflow built-in | Snowpark ML | BYO (MLflow, SageMaker) |
| Lock-in risk | Medium | Medium | Low |
| Operational overhead | Low | Low | High |

MigryX: Purpose-Built Parsers for Every Legacy Technology

MigryX does not rely on generic text matching or regex-based parsing. For every supported legacy technology, MigryX has built a dedicated Abstract Syntax Tree (AST) parser that understands the full grammar and semantics of that platform. This means MigryX captures not just what the code does, but why — understanding implicit behaviors, default settings, and platform-specific quirks that generic tools miss entirely.

Migration Phases: A Structured Roadmap

Successful Alteryx migrations follow a phased approach that minimizes risk and builds organizational confidence incrementally. Here is the five-phase roadmap we recommend:

Phase 1: Discovery (Weeks 1–2)

Inventory every workflow, macro, analytic app, and data connection in your Alteryx environment. Catalog metadata: file paths, last-modified dates, scheduled frequency, Gallery usage statistics, and owner information. The goal is a complete picture of what exists and what is actually in use.

- Export the Alteryx Server API workflow list or scan shared drives for .yxmd/.yxmc/.yxwz files

- Identify dormant workflows (not executed in 6+ months) as candidates for retirement

- Flag workflows with external dependencies: database connections, API calls, file-share reads
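The shared-drive scan described above can be sketched in a few lines of Python. This is a minimal illustration, not a MigryX component: the names `inventory_workflows` and `WorkflowRecord` are hypothetical, and the file's last-modified time is used as a rough stand-in for "last executed", which only Server or Gallery run history can confirm.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from pathlib import Path
from typing import Optional

# Extensions named in the discovery step, mapped to the asset type they hold.
KIND_BY_EXTENSION = {".yxmd": "workflow", ".yxmc": "macro", ".yxwz": "analytic app"}


@dataclass
class WorkflowRecord:
    path: Path
    kind: str                # "workflow", "macro", or "analytic app"
    last_modified: datetime
    dormant: bool            # True if not modified within the cutoff window


def inventory_workflows(
    root: Path, dormant_after_days: int = 180, now: Optional[datetime] = None
) -> list:
    """Walk a directory tree and return one record per Alteryx file found."""
    now = now or datetime.now()
    cutoff = now - timedelta(days=dormant_after_days)
    records = []
    for path in sorted(root.rglob("*")):
        ext = path.suffix.lower()
        if not path.is_file() or ext not in KIND_BY_EXTENSION:
            continue
        # Assumption: modification time approximates activity; it is not run history.
        modified = datetime.fromtimestamp(path.stat().st_mtime)
        records.append(
            WorkflowRecord(path, KIND_BY_EXTENSION[ext], modified, modified < cutoff)
        )
    return records
```

Records flagged as dormant are only candidates for retirement; confirm against actual schedule and Gallery usage data before dropping anything from scope.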

Phase 2: Analysis (Weeks 2–4)

Parse each workflow to assess complexity, identify tool usage patterns, and map dependencies. Classify workflows into complexity tiers:

- Simple (Tier 1): Linear data flow, standard tools only, no macros. ~40% of typical portfolios.

- Moderate (Tier 2): Joins, filters, formulas with moderate expression complexity, standard macros. ~35% of portfolios.

- Complex (Tier 3): Iterative macros, spatial tools, SDK extensions, in-database processing, analytic apps. ~25% of portfolios.
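A rough version of this tiering can be sketched by reading the workflow XML directly. The sketch below rests on several assumptions: that each tool appears as a `Node` element with a `GuiSettings` child carrying a `Plugin` attribute, that macro references appear as a `Macro` attribute on `EngineSettings`, and that the marker substrings are reasonable proxies for tool families. The function name and marker lists are hypothetical and should be tuned against your own portfolio.

```python
import xml.etree.ElementTree as ET

# Assumed plugin-name substrings that signal Tier 3 features (spatial, in-database, SDK).
TIER3_MARKERS = ("spatial", "lockin", "python")
# Assumed plugin-name substrings for the Tier 2 tools listed above.
TIER2_MARKERS = ("join", "filter", "formula")


def classify_workflow(xml_text: str) -> int:
    """Return 1, 2, or 3 as a first-pass complexity tier for one workflow."""
    root = ET.fromstring(xml_text)
    plugins = []
    uses_macro = False
    for node in root.iter("Node"):
        gui = node.find("GuiSettings")
        if gui is not None:
            plugins.append(gui.get("Plugin", "").lower())
        engine = node.find("EngineSettings")
        if engine is not None and engine.get("Macro"):
            uses_macro = True  # the node calls out to a macro file
    if any(marker in plugin for plugin in plugins for marker in TIER3_MARKERS):
        return 3
    if uses_macro or any(marker in plugin for plugin in plugins for marker in TIER2_MARKERS):
        return 2
    return 1
```

A substring check cannot tell a standard macro from an iterative one or measure expression complexity, so treat the result as a starting point for review rather than a final classification.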

Phase 3: Conversion (Weeks 4–10)

Convert workflows in priority order, starting with Tier 1 to build momentum and validate the toolchain. Automated conversion handles the bulk of Tier 1 and Tier 2 workflows. Tier 3 workflows require manual intervention guided by automated scaffolding.

Phase 4: Validation (Weeks 8–12, overlapping with conversion)

Run parallel execution: the original Alteryx workflow and the converted Python pipeline process the same input data. Compare outputs at the row and column level. Validation should be automated and produce a reconciliation report that business owners can review.
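One way to sketch the row- and column-level comparison uses only the standard library. It assumes both systems can export their output as CSV with a unique key column; the function name `reconcile` and the report fields are hypothetical. Values are compared as text, so formatting differences such as `10` versus `10.0` would surface as mismatches unless normalized first.

```python
import csv
import io


def reconcile(expected_csv: str, actual_csv: str, key: str) -> dict:
    """Compare two CSV extracts on a key column and report every difference."""

    def load(text: str) -> dict:
        # Index rows by key; assumes the key is unique within each extract.
        return {row[key]: row for row in csv.DictReader(io.StringIO(text))}

    expected, actual = load(expected_csv), load(actual_csv)
    report = {
        "missing_in_actual": sorted(expected.keys() - actual.keys()),
        "unexpected_in_actual": sorted(actual.keys() - expected.keys()),
        "cell_mismatches": [],  # tuples of (key, column, expected value, actual value)
    }
    for k in sorted(expected.keys() & actual.keys()):
        for col in sorted(set(expected[k]) | set(actual[k])):
            old, new = expected[k].get(col), actual[k].get(col)
            if old != new:
                report["cell_mismatches"].append((k, col, old, new))
    report["passed"] = not (
        report["missing_in_actual"]
        or report["unexpected_in_actual"]
        or report["cell_mismatches"]
    )
    return report
```

For large outputs the same comparison would typically run as a join inside the target platform; the structure of the report (missing rows, extra rows, cell-level differences) stays the same.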

Phase 5: Deployment & Decommission (Weeks 10–14)

Deploy validated pipelines to the target platform, configure scheduling, set up monitoring and alerting, and decommission corresponding Alteryx Server schedules. Maintain a 2–4 week parallel-run period where both systems operate before fully retiring Alteryx.

MigryX: Automated Workflow Discovery & Dependency Mapping

MigryX accelerates Phases 1 and 2 by automatically scanning your Alteryx environment—whether files reside on shared drives or Alteryx Server—and producing a complete dependency map. Every macro reference, data connection, and cross-workflow dependency is identified, classified by complexity tier, and visualized in an interactive graph. This typically compresses a 4-week manual discovery effort into 2–3 days.

From parsed legacy code to production-ready modern equivalents — MigryX automates the entire conversion pipeline

From Legacy Complexity to Modern Clarity with MigryX

Legacy ETL platforms encode business logic in visual workflows, proprietary XML formats, and platform-specific constructs that are opaque to standard analysis tools. MigryX’s deep parsers crack open these proprietary formats and extract the underlying data transformations, business rules, and data flows. The result is complete transparency into what your legacy code actually does — often revealing undocumented logic that even the original developers had forgotten.

Handling Alteryx-Specific Features

Certain Alteryx capabilities require special migration strategies because they lack direct equivalents in general-purpose Python:

Spatial Analytics

Alteryx includes built-in spatial tools (Spatial Match, Trade Area, Distance, Drive Time) powered by TomTom data. In the cloud, you can replace these with GeoPandas for vector operations, H3 for hexagonal spatial indexing, or PostGIS/BigQuery GIS for database-native spatial queries. Trade area and drive time analysis may require third-party APIs (Google Maps, Mapbox, ESRI).
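For simple point-to-point cases, straight-line distance needs no spatial library at all. The sketch below uses the haversine formula, a standard great-circle approximation on a spherical Earth; it is not the algorithm Alteryx uses, and it is no substitute for drive-time or trade-area analysis. The function name is hypothetical.

```python
import math

EARTH_RADIUS_KM = 6371.0088  # mean Earth radius; a spherical approximation


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in kilometers between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (
        math.sin(d_phi / 2) ** 2
        + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2
    )
    # Clamp to 1.0 to guard against floating-point overshoot near antipodal points.
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(min(1.0, a)))
```

Results will differ slightly from ellipsoidal calculations, so agree on a tolerance with business owners before validating converted spatial workflows against Alteryx output.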

Analytic Apps

Alteryx analytic apps present a GUI to end users via the Gallery. The cloud-native equivalent is a lightweight web application (Streamlit, Gradio, or a custom React front-end) backed by a Python API that executes the converted logic on demand.

SDK Tools & Custom Connectors

Organizations with custom C++ or Python SDK tools embedded in their workflows must extract the underlying logic and repackage it as standard Python modules. If the SDK tool wraps a proprietary API, the API calls can typically be replicated with the requests library or an official SDK.

Batch Macros for Dynamic Input

Batch macros that dynamically change input file paths or database tables are common in Alteryx. The Pythonic equivalent uses parameterized functions combined with configuration files (YAML/JSON) or environment variables to achieve the same dynamic behavior without visual macro overhead.
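A minimal sketch of that pattern follows, assuming a JSON config with `base_dir` and `files` keys and an override environment variable named `PIPELINE_INPUT_DIR`. All of these names, and both function names, are hypothetical choices for illustration.

```python
import json
import os
from typing import Callable, Mapping, Optional


def resolve_inputs(config_text: str, env: Optional[Mapping[str, str]] = None) -> list:
    """Expand a JSON config into one resolved input path per batch."""
    env = os.environ if env is None else env
    config = json.loads(config_text)
    # Hypothetical variable name: lets each environment redirect inputs without a code change.
    base_dir = env.get("PIPELINE_INPUT_DIR", config["base_dir"])
    return [f"{base_dir}/{name}" for name in config["files"]]


def run_batches(
    config_text: str,
    process: Callable[[str], object],
    env: Optional[Mapping[str, str]] = None,
) -> list:
    """Call `process` once per resolved input, much as a batch macro runs once per control record."""
    return [process(path) for path in resolve_inputs(config_text, env)]
```

Here `process` stands for the converted workflow logic; passing it in as a parameter keeps the looping concern separate from the transformation itself, which also makes each piece easier to test.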

ROI Considerations and Timeline Expectations

Migration is an investment, and stakeholders need clear ROI projections. Based on industry benchmarks and our experience with enterprise migrations, here are realistic expectations:

| Metric | Before (Alteryx Server) | After (Cloud-Native) |
|---|---|---|
| Annual licensing | $200K–$500K | $50K–$150K (compute) |
| Infrastructure ops | 2–3 FTEs | 0.5–1 FTE (managed service) |
| Average job runtime | 45 min (single node) | 12 min (distributed) |
| Max data volume | ~200 GB (memory bound) | Petabyte-scale |
| Developer hiring pool | Narrow (Alteryx certified) | Broad (Python/SQL) |

Typical migration timelines for mid-sized portfolios (100–300 workflows):

- With automation (MigryX): 10–14 weeks end-to-end, including validation and parallel run

- Manual migration: 6–12 months, with higher risk of inconsistency and rework

The breakeven point for most organizations is 8–14 months post-migration, after which cumulative savings from reduced licensing and infrastructure costs exceed the migration investment.
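The breakeven arithmetic is simple enough to sketch directly: divide the one-time migration cost by the monthly savings. The function name is hypothetical, and the figures in the usage note are illustrative inputs rather than benchmarks.

```python
def breakeven_months(
    migration_cost: float, annual_cost_before: float, annual_cost_after: float
) -> float:
    """Months until cumulative savings cover a one-time migration cost."""
    monthly_savings = (annual_cost_before - annual_cost_after) / 12
    if monthly_savings <= 0:
        raise ValueError("The target platform must cost less per year to break even.")
    return migration_cost / monthly_savings
```

As an illustration, take $400,000 per year before and $100,000 per year after (both inside the ranges in the table above) and an assumed migration cost of $250,000. Monthly savings are $25,000, so `breakeven_months(250_000, 400_000, 100_000)` gives 10 months. A fuller model would also include the FTE reduction and the cost of running both systems during the parallel period.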

The key to a successful Alteryx-to-cloud migration is treating it as a structured engineering project—not a one-time lift-and-shift. Phased execution, automated conversion, and rigorous validation ensure that the transition delivers lasting value without disrupting critical business processes.

Why MigryX Is the Only Platform That Handles This Migration

The challenges described throughout this article are exactly what MigryX was built to solve. Here is how MigryX transforms this process:

- Deep AST parsing: MigryX’s custom-built parsers achieve 95% accuracy on every supported legacy technology — not through approximation, but through true semantic understanding.

- Merlin AI augmentation: Where deterministic parsing reaches its limit, Merlin AI resolves ambiguities and implicit behaviors, pushing accuracy to 99%.

- Complete coverage: MigryX supports 25+ source technologies including SAS, Informatica, DataStage, SSIS, Alteryx, Talend, ODI, Teradata, and Oracle PL/SQL.

- End-to-end automation: From parsing to conversion to validation — MigryX automates the entire pipeline, not just one step.

MigryX combines precision AST parsing with Merlin AI to deliver 99% accurate, production-ready migration, turning what used to be a multi-year manual effort into a streamlined, validated process.
