The Customer Challenge

A Fortune 500 enterprise approached us with a familiar but complex request: migrate their entire Oracle ODI estate — hundreds of mappings, custom knowledge modules, and orchestrated load plans — to a modern stack: dbt for transformation logic, Spark SQL as the execution engine, and Apache Airflow for orchestration. The question: can MigryX automate this, and what level of automation is achievable?

This was not a theoretical exercise. The organization had over 400 ODI mappings in production, 25 custom Knowledge Modules developed over a decade, and 15 load plans that coordinated nightly, weekly, and monthly batch cycles across their data warehouse. Their Oracle license renewal was 10 months away, and they needed a credible migration plan — with realistic timelines and automation percentages — before committing to a new platform.

The target architecture was already decided: dbt for SQL-based transformation modeling, Spark SQL running on Databricks for distributed execution at scale, and Apache Airflow (managed via Amazon MWAA) for production orchestration. What they needed was confidence that the migration from ODI to this stack could be substantially automated, not hand-rewritten mapping by mapping over the course of a year.

We had 12 years of business logic embedded in ODI. Rewriting it manually was a non-starter — we needed automation that understood ODI’s architecture, not just its SQL.

Oracle ODI to dbt migration — automated end-to-end by MigryX

Why ODI to dbt + Spark SQL + Airflow?

The decision to move from ODI to dbt + Spark SQL + Airflow reflects a broader industry pattern: enterprises are decomposing monolithic ETL tools into best-of-breed components. Each piece of this target stack addresses a specific limitation of ODI.

Breaking Free from Oracle Lock-In

ODI is deeply coupled to the Oracle ecosystem. Its Knowledge Modules are optimized for Oracle Database, its runtime agents run on Oracle WebLogic, and its repository lives in an Oracle schema. This coupling means that even when organizations move their data warehouse to Snowflake or Databricks, they often keep ODI as the integration layer — paying Oracle licensing fees for a tool that is now pushing data into a non-Oracle target. The economics stop making sense.

dbt: SQL-First Transformations with Software Engineering Discipline

dbt brings version control, testing, documentation, and modularity to SQL transformations. Every transformation is a .sql file in a Git repository. Dependencies are declared through ref() and source() functions, enabling dbt to build a DAG automatically. Materializations (table, view, incremental, ephemeral) replace ODI’s IKM patterns with a cleaner, declarative approach. Schema tests replace CKM validation logic with .yml-defined assertions that run as part of every pipeline execution.

Spark SQL: Distributed Execution at Scale

Spark SQL provides the execution engine that dbt targets. Unlike ODI’s E-LT model — which pushes transformations into a single database engine — Spark SQL distributes computation across a cluster. This matters when transformation volumes grow beyond what a single database instance can handle efficiently. Spark SQL also supports a broad range of data sources (Parquet, Delta Lake, Iceberg, JDBC, S3, ADLS) without requiring source-specific Knowledge Modules.

Airflow: Industry-Standard Orchestration

Apache Airflow replaces ODI’s Package/Scenario/Load Plan orchestration with Python-defined DAGs. Airflow provides what ODI’s orchestration layer lacks: a rich ecosystem of operators (Spark, dbt, Kubernetes, S3, Slack, PagerDuty), dynamic DAG generation, robust retry and SLA mechanisms, and a web UI for monitoring and manual intervention. Critically, Airflow is open source with managed offerings from every major cloud provider (Amazon MWAA, Google Cloud Composer, Astronomer).

The Combined Value

Together, this stack gives data teams:

- Version-controlled SQL transformations — every change tracked in Git, peer-reviewed through pull requests, and deployed through CI/CD

- Distributed execution — Spark SQL scales horizontally for large data volumes without re-architecting transformations

- Production-grade scheduling — Airflow handles dependency management, retries, alerting, SLAs, and cross-DAG triggers

- Open-source foundation — no single-vendor lock-in, broad talent pool, and an active community driving continuous improvement

- Cost transparency — compute costs are visible and controllable (cluster sizing, spot instances, auto-scaling) rather than buried in Oracle license bundles

MigryX: Purpose-Built Parsers for Every Legacy Technology

MigryX does not rely on generic text matching or regex-based parsing. For every supported legacy technology, MigryX has built a dedicated Abstract Syntax Tree (AST) parser that understands the full grammar and semantics of that platform. This means MigryX captures not just what the code does, but why — understanding implicit behaviors, default settings, and platform-specific quirks that generic tools miss entirely.

The Architecture: ODI to dbt + Spark SQL + Airflow

Mapping ODI’s architecture to dbt + Spark SQL + Airflow requires understanding both the conceptual alignment and the technical translation at each layer. The following breakdown covers every major ODI component and its target equivalent.

ODI Mappings to dbt Models

Each ODI Mapping (or Interface in ODI 11g) translates to a dbt model — a .sql file that uses SELECT statements with ref() for upstream model dependencies and source() for raw data references. ODI’s source-to-target column expressions become the SELECT list. Join conditions become JOIN clauses. Filter expressions become WHERE clauses. Aggregations, CASE logic, and derived columns map directly to SQL.

Knowledge Modules to dbt Materializations and Spark SQL DDL

ODI’s IKM patterns (insert, update, merge, incremental) map to dbt materializations. An IKM Oracle Incremental Update becomes a dbt incremental model with a merge strategy. An IKM SQL to SQL Append becomes a dbt table materialization. LKM patterns (loading from external sources to staging) translate to dbt source() definitions combined with staging models that clean and type-cast raw data. CKM validation logic translates to dbt schema tests (unique, not_null, accepted_values, relationships) and custom data tests.

Load Plans to Airflow DAGs

ODI Load Plans are hierarchical execution trees with serial steps, parallel steps, exception handling, and restart/skip logic. These translate to Airflow DAGs where serial steps become sequential task dependencies (>> operator), parallel steps become tasks within a TaskGroup that execute concurrently, and exception steps become trigger_rule configurations (e.g., one_failed). Load Plan variables map to Airflow Variables or XCom values passed between tasks.

Topology to Connection Configurations

ODI’s Topology (Data Servers, Physical Schemas, Logical Schemas, Contexts) translates to two configuration layers: dbt profiles.yml for Spark SQL connection settings (Thrift server endpoint, database, schema, threads) and Airflow Connections for external system access (JDBC endpoints, S3 buckets, API credentials). Context-based environment switching (DEV/QA/PROD) maps to dbt targets and Airflow environment variables.

Component Mapping Reference

The following table provides a comprehensive mapping of ODI components to their dbt and Airflow equivalents:

| ODI Component | dbt Equivalent | Airflow Equivalent |

|---|---|---|

| Mapping / Interface | dbt model (.sql file) |

— |

| IKM (Insert / Update / Merge) | Materialization (table / incremental) |

— |

| Load Plan | — | Airflow DAG |

| ODI Variable | dbt var() |

Airflow Variable / XCom |

| Scenario | dbt run |

Task execution |

MigryX handles the complete ODI component taxonomy — from Knowledge Modules to Load Plan orchestration — generating equivalent dbt and Airflow artifacts automatically.

Automation Levels Achieved

One of the first questions any enterprise asks about automated migration is: what percentage of the work can actually be automated? The answer depends on the complexity and customization level of your ODI estate, but the results are consistently strong.

MigryX achieves high automation rates across all ODI component types, with the majority of standard mappings and load plans converting automatically. Complex custom PL/SQL Knowledge Modules may require additional review, but MigryX still accelerates these significantly.

The areas that typically benefit from manual refinement include custom PL/SQL logic embedded in Knowledge Modules, Oracle-specific SQL functions and performance hints, complex error-handling patterns, and CDC/journalizing designs that require rethinking for the target platform. MigryX flags all of these during conversion so teams can focus their effort precisely where it matters most.

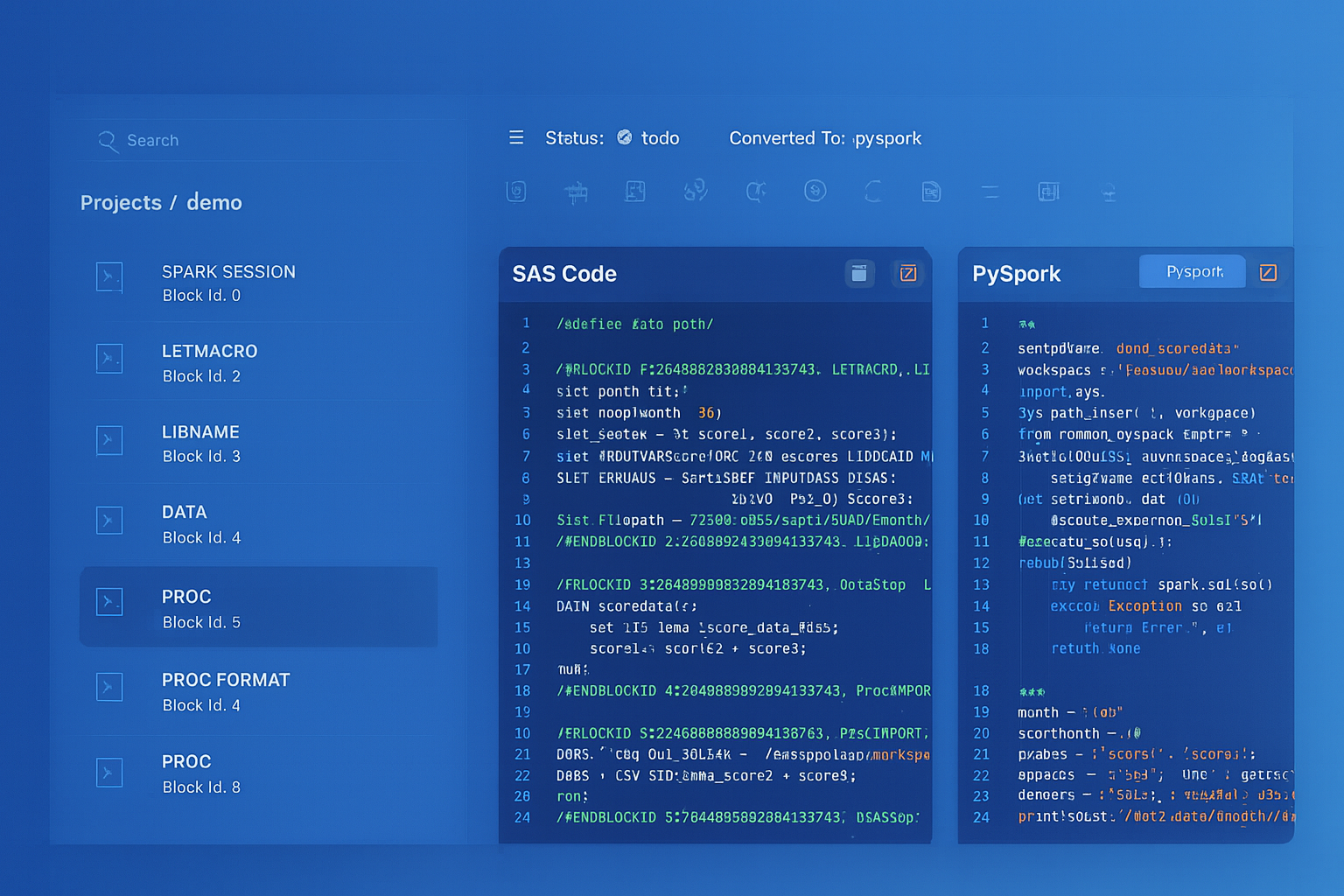

From parsed legacy code to production-ready modern equivalents — MigryX automates the entire conversion pipeline

From Legacy Complexity to Modern Clarity with MigryX

Legacy ETL platforms encode business logic in visual workflows, proprietary XML formats, and platform-specific constructs that are opaque to standard analysis tools. MigryX’s deep parsers crack open these proprietary formats and extract the underlying data transformations, business rules, and data flows. The result is complete transparency into what your legacy code actually does — often revealing undocumented logic that even the original developers had forgotten.

Real Implementation: What We Delivered

The following is drawn from an actual customer engagement (details anonymized). This Fortune 500 organization operates in the financial services sector and had been running ODI for over 12 years.

Scope and Inventory

The ODI estate consisted of hundreds of mappings, dozens of custom KMs, multiple complex load plans, and extensive variable and procedure libraries — spanning nightly, weekly, and monthly batch cycles across their data warehouse. The mappings ranged from simple single-source loads to complex multi-source transformations, and many of the custom Knowledge Modules had been developed internally over the past decade.

Automated Conversion Results

MigryX generated a complete, production-ready dbt project with models, macros, tests, and documentation, alongside Airflow DAGs for orchestration — covering the full scope of the ODI environment. The dbt project was organized into proper layers matching the organization’s existing data warehouse zones, and column-level lineage was preserved throughout the conversion.

Conversion Quality

The automated conversion achieved strong results across all component categories, with the vast majority of artifacts converting fully automatically and requiring only standard review before deployment.

Parallel-Run Validation

The validation phase ran both pipelines — ODI on Oracle and dbt on Spark SQL — against the same source data for 4 consecutive weeks. Comparison was performed at three levels:

- Row counts: Full match across all target tables from day one

- Checksum validation: Checksums on concatenated row values matched for the vast majority of tables. Minor discrepancies were traced to floating-point precision differences between Oracle and Spark SQL types — resolved by standardizing precision in the dbt models

- Sample record comparison: Randomly sampled records per table compared column-by-column confirmed full equivalence after the precision adjustments

Timeline and Effort

The migration was completed in a fraction of the time that manual conversion would have required, with a significantly smaller team. The MigryX-assisted approach followed a structured cadence: automated conversion and ingestion, review and refinement of flagged items, Airflow environment setup, and parallel-run validation — all completed well within the customer’s Oracle license renewal deadline.

How MigryX Handles ODI to dbt + Spark SQL + Airflow

MigryX handled the complete ODI-to-dbt+Airflow conversion pipeline — from repository parsing through SQL dialect translation to orchestration generation — with built-in validation at every stage.

What the Generated Code Looks Like

MigryX generates production-ready incremental dbt models with proper merge strategies, source references, and business logic preservation. Each generated model includes inline documentation and follows dbt community best practices.

MigryX generates complete Airflow DAGs that mirror the original ODI Load Plan orchestration — including task groups, dependency chains, scheduling, and failure handling — ready for deployment to any Airflow environment.

Getting Started

Migrating your ODI estate to dbt + Spark SQL + Airflow follows a structured process. Here are the steps:

- Export your ODI repository: Use ODI Studio’s Smart Export to generate XML exports of your projects, or provide MigryX with read-only access to your Work Repository database. Include all projects, folders, KMs, load plans, and topology definitions.

- Upload to MigryX: Upload the XML exports to the MigryX platform. The system automatically parses the repository structure, identifies all artifacts, and builds the dependency graph across mappings, packages, and variables.

- Review the automated conversion: MigryX generates the complete dbt project (models, sources, tests, profiles), Airflow DAGs, and a detailed conversion report. Each artifact is flagged as fully automated, automated with warnings, or requiring manual review.

- Refine custom KMs manually: Focus engineering effort on the flagged items — custom KMs with PL/SQL, Oracle-specific function calls, and complex error-handling patterns. MigryX provides stub code and mapping suggestions to accelerate this work.

- Validate with a parallel run: Execute both ODI and dbt pipelines against production data. MigryX generates the comparison queries automatically. Resolve any discrepancies before cutover.

- Deploy to Airflow: Deploy the generated DAGs to your Airflow environment (Amazon MWAA, Cloud Composer, or self-managed). Configure connections, variables, and alerting. Monitor for two full business cycles before decommissioning ODI.

The entire process — from export to production deployment — typically takes 6–12 weeks depending on the size and complexity of the ODI estate. Organizations with fewer than 200 mappings and standard KMs are on the shorter end. Organizations with 500+ mappings and heavy custom KM usage are on the longer end.

Why MigryX Is the Only Platform That Handles This Migration

The challenges described throughout this article are exactly what MigryX was built to solve. Here is how MigryX transforms this process:

- Deep AST parsing: MigryX’s custom-built parsers achieve 95% accuracy on every supported legacy technology — not through approximation, but through true semantic understanding.

- Merlin AI augmentation: Where deterministic parsing reaches its limit, Merlin AI resolves ambiguities and implicit behaviors, pushing accuracy to 99%.

- Complete coverage: MigryX supports 25+ source technologies including SAS, Informatica, DataStage, SSIS, Alteryx, Talend, ODI, Teradata, and Oracle PL/SQL.

- End-to-end automation: From parsing to conversion to validation — MigryX automates the entire pipeline, not just one step.

MigryX combines precision AST parsing with Merlin AI to deliver 99% accurate, production-ready migration — turning what used to be a multi-year manual effort into a streamlined, validated process. See it in action.

Ready to migrate your ODI estate to dbt + Spark SQL + Airflow?

Schedule a technical deep-dive where we’ll analyze your ODI repository and show you the automation potential for your specific workloads.

Schedule a Technical Deep-Dive